
Docker 101: Fundamentals & the Dockerfile

Containerize all project dependencies in one place for easy deployments anywhere.

Introduction

You, like me, may have heard of Docker before. You may have co-workers or developer friends who rave about it, who “dockerize” everything they can, and who are at an utter loss for words when it comes to explaining Docker, and all its glory, in terms that are understandable to the average person. Today, I will attempt to demystify Docker for you.

This will actually be a two-part series on Docker, because Docker needs at least two separate posts for its two best-known products:

- Docker (and the Dockerfile)

- Docker Compose

So let’s begin at the beginning and I’ll explain what Docker actually is, in terms even I understand.

What is Docker?

The Docker website itself simply describes Docker as:

“the world’s leading software containerization platform” — Docker, Docker Overview

That really clears it up, right? Umm…yeah, not really. A much better description of Docker is found on OpenSource.com.

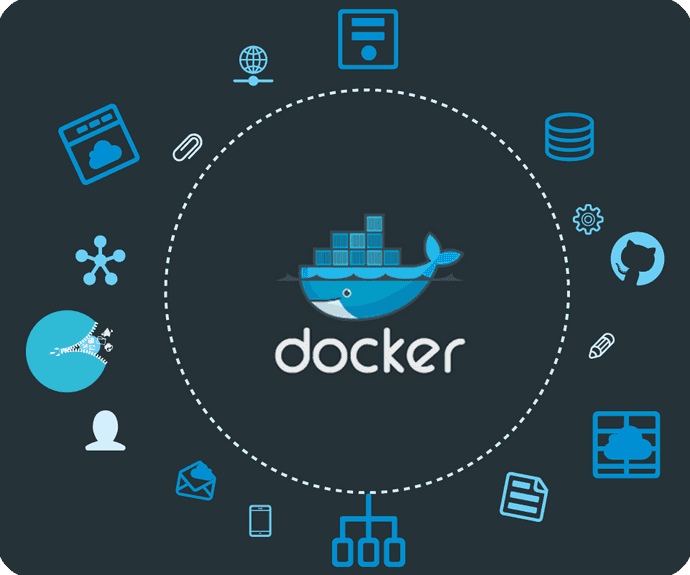

Docker is a tool designed to make it easier to create, deploy, and run applications by using containers. Containers allow a developer to package up an application with all of the parts it needs, such as libraries and other dependencies, and ship it all out as one package. — OpenSource.com, What is Docker?

What Docker really does is separate the application code from infrastructure requirements and needs.

It does this by running each application in an isolated environment called a ‘container.’

This means developers can concentrate on the code that runs in the Docker container without worrying about the system it will ultimately run on. Meanwhile, devops can focus on ensuring the right programs are installed in the container, which reduces both the number of systems needed and the complexity of maintaining them after deployment.

Which is a perfect segue to the next point: why Docker?

Why Docker?

I can’t tell you how many times I’ve heard a developer (myself included) say, “It works on my machine, I don’t know why it won’t work on yours.” — any developer, ever

This is what Docker is designed to prevent: the inevitable confusion that comes when a dev has been working on a project on their local machine for days (or weeks), and as soon as it’s deployed to a new environment, the app won’t run. Most likely, that’s because a host of installed dependencies are necessary to run the application but aren’t saved in the package.json or the build.gradle, or specified in the manifest.yml.

Every single Docker container begins from a base image, typically a pure vanilla Linux environment that knows nothing about your application.

Then, we tell the container everything it needs to know — all the dependencies it needs to download and install in order to run the application. This process is done with a Dockerfile, but I’m getting ahead of myself.

For this section, suffice it to say, Docker takes away the guesswork (and hours spent debugging) from deploying applications because it always starts as a fresh, isolated machine and the very same dependencies get added to it. Every. Single. Time.

No environments that have different versions of dependencies installed. No environments missing dependencies entirely. None of that nonsense with Docker.

Docker Tools

Before I dive into the Dockerfile, I’ll give a quick rundown of Docker’s suite of tools.

Docker provides four main tools:

The Docker Engine is Docker’s

“powerful open source containerization technology combined with a work flow for building and containerizing your applications.” — Docker, About Docker Engine

It’s what builds and executes Docker images from either a single Dockerfile or docker-compose.yml. When someone uses a docker command through the Docker CLI, it talks to this engine to do what needs to be done.

Docker Compose

“is a tool for defining and running multi-container Docker applications” — Docker, Overview of Docker Compose

This is what you use when you have an application made up of multiple microservices, databases, and other dependencies. The docker-compose.yml allows you to configure all those services in one place and start them all with a single command. I’ll cover Docker Compose in much greater detail in the follow-up blog post.

In years past, Docker Machine was more popular than it is now.

“Docker Machine is a tool that lets you install Docker Engine on virtual hosts, and manage the hosts with docker-machine commands.” — Docker, Docker Machine Overview

It’s fallen by the wayside a bit as Docker images have become more stable on their native platforms, but earlier in Docker’s history it was very helpful. That’s about all you need to know about Docker Machine for now.

The final tool, Docker Swarm, won't be covered in detail. But for now, Docker Swarm

“creates a swarm of Docker Engines where you can deploy application services. You don’t need additional orchestration software to create or manage a swarm” — Docker, Swarm Mode Overview

These are the tools you’ll become familiar with, and now, we can talk about the Dockerfile.

The Dockerfile — where it all begins

Docker is a powerful tool, but its power is harnessed through the use of things called Dockerfiles.

A Dockerfile is a text document that contains all the commands a user could call on the command line to assemble an image. Using docker build users can create an automated build that executes several command-line instructions in succession. — Docker, Dockerfile Reference

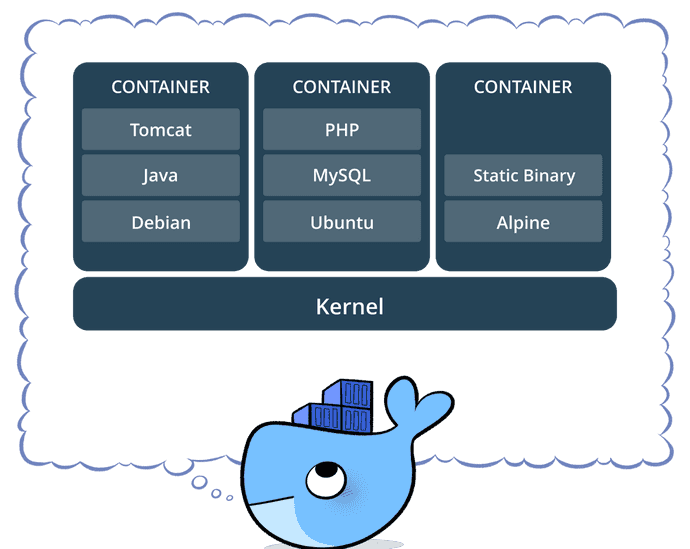

A Docker image consists of read-only layers each of which represents a Dockerfile instruction. The layers are stacked and each one is a delta of the changes from the previous layer.
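If you want to see those layers for yourself, something like the sketch below should do it. It assumes Docker is installed and that the directory you pass in contains a Dockerfile. The function name build_and_inspect is one I made up for this post; docker build and docker history are regular Docker CLI commands.

```shell
# Build an image from a directory containing a Dockerfile, then list its layers.
# Usage: build_and_inspect IMAGE_NAME BUILD_DIRECTORY
# (build_and_inspect is a hypothetical helper name, not part of Docker)
build_and_inspect() {
  # build the image and tag it with the given name; stop if the build fails
  docker build -t "$1" "$2" || return 1
  # show the image's layers alongside the instruction that created each one
  docker history "$1"
}
```

Calling build_and_inspect user1/node-example . would build from the current directory and then print one row per layer, with the most recent layer at the top.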

This is what I was talking about above: when a Docker container starts up, it needs to be told what to do. It has nothing installed and knows how to do nothing. Truly nothing.

The first thing the Dockerfile needs is a base image. A base image gives the container its starting point: either a plain OS such as Ubuntu, RHEL, or SUSE, or an image that already has a runtime such as Node or Java installed on top of one.

Next, you’ll provide setup instructions. These are all the things the Docker container needs to know about: environment variables, dependencies to install, where files live, etc.

And finally, you have to tell the container what to do. Typically this is the command that starts the application you set up in the previous steps. I’ll give a quick overview of the most common Dockerfile commands next and then show some examples to help it make sense.
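Here’s a minimal sketch of that three-part shape, written out from the shell so you can paste it into a terminal. The hello-docker directory name and the GREETING variable are placeholders I invented for illustration; only the overall structure (base image, setup, command) comes from the description above.

```shell
# Create a scratch directory and write a three-part Dockerfile into it.
# (hello-docker and GREETING are hypothetical names used only for this example)
mkdir -p hello-docker

cat > hello-docker/Dockerfile <<'EOF'
# 1. base image: the starting point for everything else
FROM ubuntu:16.04

# 2. setup: environment variables, files, dependencies
ENV GREETING="hello from a container"

# 3. what to do when the container starts
CMD ["sh", "-c", "echo $GREETING"]
EOF

# To try it, assuming Docker is installed:
#   docker build -t hello-docker hello-docker
#   docker run --rm hello-docker
```

If everything is set up correctly, running the container should print the greeting and exit.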

Dockerfile Commands

Below are the commands that will be used 90% of the time when you’re writing Dockerfiles, and what they mean.

- FROM: initializes a new build stage and sets the base image for subsequent instructions. As such, a valid Dockerfile must start with a FROM instruction.
- RUN: executes any commands in a new layer on top of the current image and commits the results. The resulting committed image is used for the next step in the Dockerfile.
- ENV: sets the environment variable <key> to the value <value>. This value is in the environment for all subsequent instructions in the build stage and can be substituted inline in many of them as well.
- EXPOSE: informs Docker that the container listens on the specified network ports at runtime. You can specify whether the port listens on TCP or UDP; the default is TCP if the protocol is not specified. EXPOSE on its own does not publish the port. To make it reachable from the host and the outside world, publish it when you start the container (for example, with docker run -p).
- VOLUME: creates a mount point with the specified name and marks it as holding externally mounted volumes from the native host or other containers.
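To see the five instructions above side by side, here’s a small sketch that writes a Dockerfile using each of them once. The five-instructions directory, the APP_PORT variable, and the /data path are all made up for this example; treat it as an illustration of the syntax rather than a useful image.

```shell
# Write a Dockerfile that uses FROM, RUN, ENV, EXPOSE and VOLUME once each.
# (five-instructions, APP_PORT and /data are hypothetical names for illustration)
mkdir -p five-instructions

cat > five-instructions/Dockerfile <<'EOF'
# FROM: every Dockerfile starts from a base image
FROM ubuntu:16.04

# RUN: executes at build time and commits the result as a new layer
RUN mkdir -p /data

# ENV: available to later instructions and to the running container
ENV APP_PORT=8080

# EXPOSE: documents the port the app listens on (here, substituted from ENV)
EXPOSE $APP_PORT

# VOLUME: marks /data as a mount point for externally mounted volumes
VOLUME /data
EOF
```

Note that the ENV value is reused inline by EXPOSE, which is the substitution behavior mentioned in the ENV description.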

Ok, now that the Dockerfile commands have been defined, let’s look at some sample Dockerfiles. If you want to learn more about Dockerfile commands, I’d highly recommend the Dockerfile documentation; it’s very well written.

Dockerfile Examples

Here are a few sample Dockerfiles, complete with comments explaining each line and what’s happening layer by layer.

Node.js Dockerfile Example

# creates a layer from the node:carbon Docker image
FROM node:carbon

# create the app directory for inside the Docker image
WORKDIR /usr/src/app

# copy and install app dependencies from the package.json (and the package-lock.json) into the root of the directory created above
COPY package*.json ./
RUN npm install

# bundle app source inside Docker image
COPY . .

# expose port 8080 to have it mapped by Docker daemon
EXPOSE 8080

# define the command to run the app (it's the npm start script from the package.json file)
CMD [ "npm", "start" ]

Java Dockerfile Example

# creates a layer from the openjdk:8-jdk-alpine Docker image
FROM openjdk:8-jdk-alpine

# create a volume at /tmp, where Tomcat creates its working directories
VOLUME /tmp

# copy the built JAR files from build/libs into /app in the image
COPY build/libs /app

# execute the JAR in the entry point below
ENTRYPOINT ["java", "-Djava.security.egd=file:/dev/./urandom", "-jar", "/app/java-example.jar"]

Python Dockerfile Example

# creates a layer from the ubuntu:16.04 Docker image
FROM ubuntu:16.04

# adds files from the Docker client’s current directory
COPY . /app

# builds the application with make
RUN make /app

# specifies what command to run within the container
CMD python /app/app.py

Jenkins Dockerfile Example

# creates a layer from the jenkins/jenkins:lts Docker image
FROM jenkins/jenkins:lts

# switch to root, because the base image runs as the jenkins user and installing packages requires root
USER root

# add and install all the necessary dependencies
RUN apt-get update && \
    apt-get install -qy \
      apt-utils \
      libyaml-dev \
      build-essential \
      python-dev \
      libxml2-dev \
      libxslt-dev \
      libffi-dev \
      libssl-dev \
      default-libmysqlclient-dev \
      python-mysqldb \
      python-pip \
      libjpeg-dev \
      zlib1g-dev \
      libblas-dev \
      liblapack-dev \
      libatlas-base-dev \
      apt-transport-https \
      ca-certificates \
      zip \
      unzip \
      gfortran && \
    rm -rf /var/lib/apt/lists/*

# install docker
RUN curl -fsSL get.docker.com -o get-docker.sh && sh get-docker.sh

# install docker compose
RUN curl -L https://github.com/docker/compose/releases/download/1.8.0/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose && \
    chmod +x /usr/local/bin/docker-compose

# upgrade cffi, then install pip2pi and ansible with pip
RUN pip install cffi --upgrade
RUN pip install pip2pi ansible==2.0

# copy groovy executors and plugins for jenkins and run the plugins
COPY executors.groovy /usr/share/jenkins/ref/init.groovy.d/executors.groovy
COPY plugins.txt /usr/share/jenkins/ref/plugins.txt
RUN /usr/local/bin/plugins.sh /usr/share/jenkins/ref/plugins.txt

# add the jenkins user to the docker group so that sudo is not required to run docker commands
RUN groupmod -g 1026 docker && gpasswd -a jenkins docker

# switch back to the jenkins user
USER jenkins

The commands aren’t really that hard or complicated, once you see the files broken down like this.

Images vs. Containers

The terms Docker image and Docker container are sometimes used interchangeably, but they shouldn’t be: they mean two different things.

Docker images are executable packages that include everything needed to run an application — the code, a runtime, libraries, environment variables, and configuration files.

Docker containers are a runtime instance of an image — what the image becomes in memory when executed (that is, an image with state, or a user process).

In short, a Docker image is a snapshot built from the Dockerfile, and a Docker container is a running instance of that image, started according to the instructions the image contains.
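One way to make the distinction stick is to start two containers from the same image. The sketch below assumes Docker is installed and that the image you pass in already exists locally; run_two_containers and the container names example-one and example-two are names I invented for this post.

```shell
# Start two independent containers from one image, then list the image and both containers.
# Usage: run_two_containers IMAGE_NAME
# (run_two_containers, example-one and example-two are hypothetical names)
run_two_containers() {
  # two separate containers, each a runtime instance of the same image
  docker run -d --name example-one "$1" || return 1
  docker run -d --name example-two "$1" || return 1
  # the image is listed once here...
  docker images "$1"
  # ...while every running container started from it is listed here
  docker ps --filter "ancestor=$1"
}
```

Calling run_two_containers user1/node-example should leave you with a single image and two running containers based on it.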

We clear? Cool.

Docker Engine Commands

Once the Dockerfile has been written, the Docker image can be built and the Docker container can be run. All of this is taken care of by the Docker Engine that I covered briefly earlier.

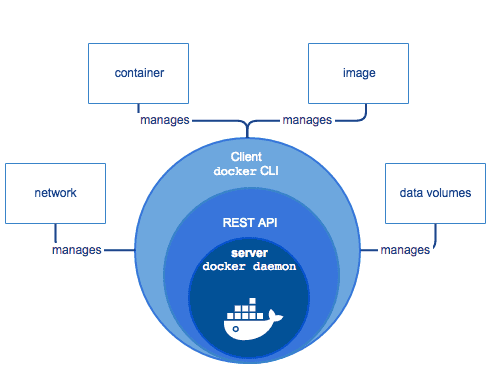

A user can interact with the Docker Engine through the Docker CLI, which talks to the Docker REST API, which talks to the long-running Docker daemon process (the heart of the Docker Engine), as illustrated above.

The CLI uses the Docker REST API to control or interact with the Docker daemon through scripting or direct CLI commands. Many other Docker applications use the underlying API and CLI as well.

- docker build: builds an image from a Dockerfile
- docker images: displays all Docker images on that machine
- docker run: starts a container and runs any commands in that container. There are multiple options that go along with docker run, including:
  - -p: allows you to specify ports in host and Docker container
  - -it: opens up an interactive terminal after the container starts running
  - -v: bind mount a volume to the container
  - -e: set environment variables
  - -d: starts the container in detached mode (it runs in a background process)
- docker rmi: removes one or more images
- docker rm: removes one or more containers
- docker kill: kills one or more running containers
- docker ps: displays a list of running containers
- docker tag: tags the image with an alias that can be referenced later (good for versioning)
- docker login: login to Docker registry
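To make a few of those flags concrete, here’s a hedged sketch that combines several docker run options and then cleans up afterwards. The function names, the NODE_ENV variable, the logs directory, and the container name node-example are all assumptions I’ve made for illustration; the image name is the one used in the examples in this post.

```shell
# Start a container in the background with a published port, an environment
# variable and a bind-mounted directory.
# (start_dev_container, NODE_ENV and the logs path are hypothetical)
start_dev_container() {
  docker run -d \
    -p 3003:8080 \
    -e NODE_ENV=development \
    -v "$(pwd)/logs:/usr/src/app/logs" \
    --name node-example \
    user1/node-example
}

# Stop the container, remove it, then remove the image it was started from.
stop_and_clean() {
  docker kill node-example
  docker rm node-example
  docker rmi user1/node-example
}
```

Run start_dev_container to bring the container up and stop_and_clean when you’re done with it. The order in the cleanup matters: an image can’t normally be removed while a container created from it still exists.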

These commands can be combined in ways too numerous to count, but here are a couple of simple examples of Docker commands.

docker build -t user1/node-example .

This says to Docker: build (build) the image using the current directory (.) as the build context, and tag it (-t) as user1/node-example. Don’t forget the period; this is how Docker knows where to look for the Dockerfile and the files it copies into the image.

docker run -p 3003:8080 -d user1/node-example

This tells Docker to run (run) the image that was built and tagged as user1/node-example, publish port 3003 on the host machine and map it to port 8080 inside the Docker container (-p 3003:8080), and start the container as a background process (-d).

That’s how simple it can be to run commands using the Docker CLI. Once again, the Docker documentation is well done.

Conclusion & Part Two: Docker Compose

Now you’ve seen the tip of the iceberg of Docker. It gives you a lightweight, isolated runtime environment known as a ‘container’ that you can spin up and configure just about any way you like with the help of a Dockerfile.

Stay tuned for part two of this series where I’ll dive into the Docker Compose tool that lets you configure and run multiple applications with just one file and a single start command. It’s pretty darn cool.

Thanks for reading. I hope this gives you a better understanding of the basics of Docker and its power.
